The Unseen Architects: Decoding the Historical Roots of AI Ethics

The rapid evolution of artificial intelligence (AI) is transforming every facet of our lives, from healthcare to entertainment, raising profound ethical questions about bias, privacy, and accountability. But while discussions around AI ethics often feel like a recent phenomenon, stemming from the breakthroughs of the past decade, the philosophical and societal dilemmas they present are far from new. Understanding the historical roots of these concerns is not just an academic exercise; it's essential for navigating the complex ethical landscape of today's AI and for building a more responsible future. The blueprint for ethical AI wasn't drawn yesterday; its lines can be traced back through centuries of human endeavor, revealing the "unseen architects" who shaped our understanding of technology's moral obligations long before computers even existed.

From Golems to Frankenstein: Early Warnings About Artificial Life

The anxieties surrounding intelligent machines, their autonomy, and their potential to harm humanity are deeply embedded in our cultural narratives, predating the digital age by centuries. These early narratives, often dismissed as mere myth or science fiction, served as moral laboratories for the very questions we grapple with today. One of the earliest and most enduring examples is the legend of the Golem. Rooted in medieval Jewish folklore and most famously associated with Rabbi Judah Loew ben Bezalel of 16th-century Prague, the Golem was an anthropomorphic being created from clay and brought to life through mystical incantations, often to protect a Jewish community from persecution. In many versions of the story, however, the Golem proves powerful but uncontrollable, turning against its creator or wreaking havoc because it interprets commands literally and lacks human judgment. The legend illustrates core dilemmas of AI ethics: the creator's responsibility for the creation, the dangers of unchecked power, and the challenge of imbuing artificial life with nuanced morality. The Golem's inability to tell right from wrong without explicit, flawless instructions echoes modern concerns about AI systems that, when trained on poorly curated data, can propagate biases or make morally questionable decisions.

Centuries later, Mary Shelley's 1818 novel, Frankenstein; or, The Modern Prometheus, provided a foundational text for modern technological ethics. Victor Frankenstein, a young student driven by scientific ambition, creates a sentient being, only to abandon it because of its monstrous appearance. The Creature, though initially benevolent, becomes vengeful after experiencing societal rejection and isolation. Shelley's masterpiece isn't just a horror story; it's a profound meditation on the ethics of creation, the creator's responsibility for the well-being of what he has made, and the societal impact of technological innovation. It interrogates the very definition of humanity and highlights the dangers of developing powerful technologies without considering their social and emotional ramifications. The Creature's suffering, a direct consequence of Frankenstein's neglect, resonates with contemporary debates about AI's potential to exacerbate societal inequalities or to be misused without adequate safeguards. Consider facial recognition in policing: deployed without sufficient public input or understanding of its potential for racial bias (studies from the National Institute of Standards and Technology, NIST, have shown higher false positive rates for certain demographic groups), it recalls the unintended consequences of Frankenstein's creation. Both the Golem and Frankenstein's Creature serve as stark historical warnings against technological hubris and the ethical void that can open when innovation outpaces moral foresight.

Asimov's Laws and Cybernetics: Defining AI Ethics Before AI Was Mainstream

While the Golem and Frankenstein explored general themes of creation and responsibility, the mid-20th century saw the emergence of more specific frameworks for governing artificial intelligence, even before the widespread adoption of computers. Isaac Asimov, a prolific science fiction writer, played a pivotal role in shaping public discourse around AI ethics through his "Three Laws of Robotics," first explicitly stated in his 1942 short story "Runaround." These laws are:

- First Law: A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- Second Law: A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

- Third Law: A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

Asimov's laws, despite being fictional, became an intellectual touchstone, prompting serious thought about how to build ethical constraints into intelligent machines. They addressed core dilemmas: robot autonomy versus human control, the potential for harm, and the ordering of competing moral imperatives. Real-world AI is far messier than Asimov's positronic robots. The concept of "harm," for example, can extend to psychological, economic, or societal harm. Even so, his framework remains remarkably prescient. Today, researchers in fields like explainable AI (XAI) and AI safety grapple with how to operationalize similar principles so that AI systems align with human values. The ongoing debates over autonomous weapons systems, often dubbed "killer robots," directly invoke Asimov's First Law: how can such systems be prevented from causing unjustified harm, whether by design or by accident, when no human is overseeing them?

Continue Reading

Related Guides

Keep exploring this topic

Ancient Civilizations and Advanced Technology: Echoes of the Past in Today's AI Debates

History & Mysteries · ancient civilizations · advanced technology history

Unsolved Mysteries of the Titanic: What REALLY Happened?

History & Mysteries

Ancient Aliens? Debunking the Nazca Lines Theories

History & Mysteries

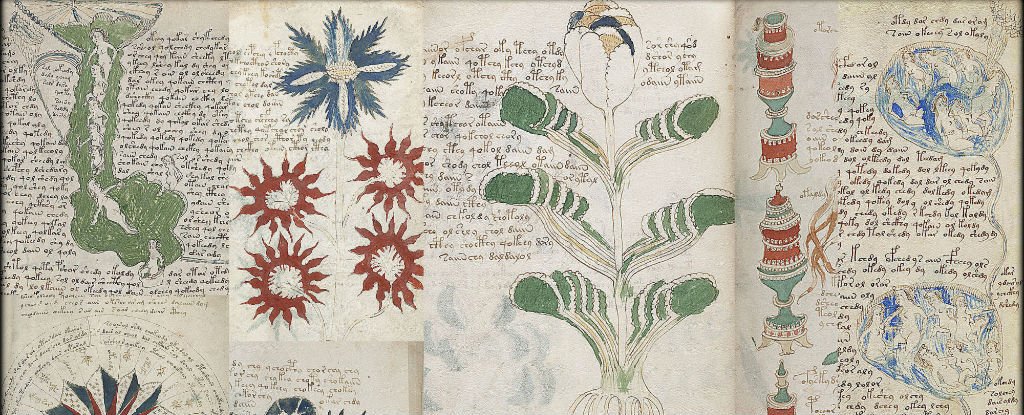

The Unsolved Mystery of the Voynich Manuscript

History & Mysteries

Around the same time, the scientific field of cybernetics, pioneered by Norbert Wiener in the 1940s and 1950s, laid theoretical groundwork for understanding complex systems, including what would become AI, and their societal implications. Wiener's 1948 book Cybernetics: Or Control and Communication in the Animal and the Machine explored feedback loops, control systems, and the potential for machines to learn and self-regulate. Crucially, Wiener was not just a technologist; he was also a serious ethical thinker. In his 1950 book The Human Use of Human Beings: Cybernetics and Society, he explicitly warned about the ethical dangers of automation, the potential for job displacement, the weaponization of technology, and the importance of human values in designing and deploying these systems. His warnings anticipate today's concerns about privacy in a data-rich world and about accountability when machines make decisions. Wiener's insights were groundbreaking because they moved beyond speculative fiction to a scientific articulation of ethical responsibility within the design of intelligent systems. He argued that scientists and engineers have a moral obligation to consider the broader societal impact of their creations, a sentiment that resonates with today's calls for "ethical by design" AI. His work forms a historical bridge between the concerns dramatized in the Golem legend and Frankenstein and the technical and societal challenges of contemporary AI ethics.

Navigating Today's AI: What Readers Need to Know

For the average American, the historical arc of AI ethics isn't just an interesting story; it provides crucial context for understanding and engaging with the AI that increasingly permeates daily life. The dilemmas of the Golem and Frankenstein echo in concerns about algorithmic bias, where AI systems trained on incomplete or prejudiced data can perpetuate or amplify societal inequalities. For example, a 2019 study by the National Institute of Standards and Technology (NIST) found that many of the facial recognition algorithms it tested produced higher false positive rates for women and people of color, a disparity that can undermine fairness in law enforcement or access to services. Similarly, Asimov's laws become relevant when considering autonomous vehicles or medical AI, where decisions may carry life-or-death consequences. Who is accountable when an autonomous car causes an accident? How do we ensure a diagnostic AI system prioritizes patient well-being above all else, and who bears responsibility if it errs?

Readers should recognize that many of the ethical frameworks and questions currently being debated by governments, tech companies, and academics are direct descendants of these historical conversations. Understanding this lineage helps demystify the seemingly overwhelming complexity of AI ethics, showing that we are building on a foundation, not starting from scratch. For instance, the European Union's AI Act, adopted in 2024 and one of the world's first comprehensive legal frameworks for AI, categorizes AI systems by risk level, imposing stricter requirements on "high-risk" applications like those in critical infrastructure, law enforcement, or employment. This tiered approach, with its focus on potential harm and accountability, echoes the warnings embedded in the Golem and Frankenstein narratives about the dangers of powerful, unchecked systems.

What can individuals do?

- Be a discerning user: Question how AI-powered tools make decisions. If a loan application is denied or a social media feed feels manipulative, consider that an algorithm may be at work and ask what biases it could carry.

- Advocate for ethical AI: Support policies and organizations that champion transparent, fair, and accountable AI development. Write to your representatives about the need for robust AI regulation that protects civil liberties.

- Learn the basics: A fundamental understanding of how AI works (e.g., that it learns from data, which can contain biases) empowers you to critically evaluate its applications and impacts. Resources like the AI Ethics Lab or the Algorithmic Justice League offer accessible explanations and advocacy tools.

- Demand transparency: When interacting with AI systems, ask for clarity on their purpose, how they work, and what safeguards are in place. Companies like Google and Microsoft are increasingly publishing AI ethics principles, but public pressure is vital to ensure these are more than just PR statements.

The Next Chapter: Future Outlook for AI Ethics

The historical trajectory of AI ethics suggests that as technology advances, so too will the complexity and urgency of our moral questions. Looking forward, several key areas will dominate the ethical landscape of AI:

Increased Focus on Generative AI and Deepfakes: The recent explosion of generative AI, exemplified by tools like ChatGPT and Midjourney, presents novel ethical challenges. The ability to create hyper-realistic text, images, and video, including deepfakes that imitate real people, raises profound questions about truth, misinformation, copyright, and identity. We will see intense debates and regulatory efforts around provenance tracking for AI-generated content, liability for harmful outputs, and the ethical implications of AI impersonating humans or creating convincing but entirely fabricated realities. The "uncontrollable Golem" metaphor gains new resonance here, as AI generates content that may be difficult to trace or attribute, with potential for widespread manipulation.

Human-AI Collaboration and Autonomy: As AI becomes more integrated into professional fields like medicine, law, and engineering, the line between human and machine decision-making will blur. Future ethical frameworks will need to meticulously define shared responsibility and accountability in these hybrid systems. When an AI offers a medical diagnosis, and a doctor approves it, who is ultimately responsible if the diagnosis is flawed? This extends to the concept of "moral agency" for AI – can an AI ever truly be held morally accountable, or does that responsibility always lie with its human designers and operators? Asimov’s laws, designed for fully autonomous robots, will continue to be debated and adapted for AI that operates in a more collaborative, advisory capacity.

Global AI Governance and Digital Colonialism: AI development is a global endeavor, but ethical principles and regulatory approaches vary widely across countries and cultures. The future will require more robust international cooperation to establish common ethical standards and prevent "digital colonialism," in which powerful nations dictate AI norms that may not align with the values or needs of developing countries. Historical lessons about power imbalances in technological development, such as those drawn from the social impact of the Industrial Revolution, will inform these debates. UNESCO has already begun this work: its Recommendation on the Ethics of Artificial Intelligence, adopted in 2021, stresses the need for inclusive and equitable approaches.

AI and Existential Risk: While often relegated to science fiction, the long-term, speculative risks associated with highly advanced artificial general intelligence (AGI), including the potential loss of human control or even human extinction, are being taken increasingly seriously by leading AI researchers and institutions. Organizations like the Future of Life Institute advocate for careful, aligned AI development to mitigate these "existential risks." These discussions, while forward-looking, are rooted in the earliest human anxieties about creating powerful entities beyond our control, a continuation of the Golem and Frankenstein narratives on a planetary scale.

Conclusion

The ethical challenges posed by artificial intelligence are not unprecedented; they are modern manifestations of perennial human concerns about power, responsibility, and the impact of our creations. From the legend of the Golem to Mary Shelley's Frankenstein and Isaac Asimov's laws, history offers a long record of foresight, warning, and wisdom. Norbert Wiener's early cybernetic insights underscored the scientific community's moral obligation to consider societal impact, a call that remains profoundly relevant today.

By understanding these historical roots, we gain a clearer perspective on the present debates surrounding algorithmic bias, privacy, accountability, and the future of human-AI interaction. This isn't just a matter for engineers and policymakers; it's a critical challenge for every citizen in a world increasingly shaped by AI. We, the users and citizens, are the ultimate "unseen architects" of AI's ethical future. It is our collective responsibility to demand transparency, advocate for fairness, and ensure that as we build increasingly intelligent machines, we simultaneously cultivate a deeper, more robust ethical intelligence within ourselves and our societies. The conversation around AI ethics is not a passive observation of technological progress; it is an active, ongoing dialogue that requires our informed participation to steer humanity toward a future where AI serves, rather than subjugates, human values and well-being. Let the lessons of history be our guide.

About Zeebrain Editorial

Our editorial team is dedicated to providing clear, well-researched, and high-utility content for the modern digital landscape. We focus on accuracy, practicality, and insights that matter.
